DATA 622 Meetup 11: Interpretable ML

George I. Hagstrom

2026-04-13

Data Science Seminar Tomorrow!!!

- Daniel Lee

- VP of Sports Analytics at PyMC Labs

- Sports Analytics

- Election Forecasting

- Probabilistic Programming

HW 4 Comments

- Cross Validation with Linear Models

- Not wrong, but you can do leave-one-out cross-validation (LOO-CV) here for free
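"For free" presumably refers to the closed-form shortcut for linear models: the leave-one-out residual equals the ordinary residual divided by \(1 - h_{ii}\), where \(h_{ii}\) is the leverage of point \(i\). A minimal sketch on synthetic data, assuming plain least squares with an intercept (the homework's actual model and data are not reproduced here):

```python
import numpy as np

def loo_residuals_ols(X, y):
    """Leave-one-out residuals for OLS from a single fit: e_i / (1 - h_ii)."""
    X1 = np.column_stack([np.ones(len(X)), X])        # add an intercept column
    XtX_inv = np.linalg.pinv(X1.T @ X1)
    h = np.einsum("ij,jk,ik->i", X1, XtX_inv, X1)     # leverages: diagonal of the hat matrix
    beta = XtX_inv @ X1.T @ y
    resid = y - X1 @ beta
    return resid / (1.0 - h)

def loo_residuals_brute(X, y):
    """Reference version: refit n times, holding out one row each time."""
    n = len(y)
    X1 = np.column_stack([np.ones(n), X])
    out = np.empty(n)
    for i in range(n):
        keep = np.arange(n) != i
        beta, *_ = np.linalg.lstsq(X1[keep], y[keep], rcond=None)
        out[i] = y[i] - X1[i] @ beta
    return out

# Synthetic data, only to compare the two routes.
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=0.3, size=40)

fast = loo_residuals_ols(X, y)
slow = loo_residuals_brute(X, y)
loo_rmse = np.sqrt(np.mean(fast ** 2))
```

Both routes give the same residuals, but the first needs one fit instead of n.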

HW 4 Comments

- Different \(\alpha\) for weighted and unweighted regression
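One reason the best \(\alpha\) can differ: the penalty is measured against the total weight in the loss, so changing the weights changes what a given \(\alpha\) means. A minimal sketch, assuming closed-form ridge without an intercept and hypothetical weights (the homework's actual weights and pipeline are not reproduced here):

```python
import numpy as np

def ridge_fit(X, y, alpha, w=None):
    """Minimise sum_i w_i * (y_i - x_i . b)^2 + alpha * ||b||^2 (no intercept)."""
    w = np.ones(len(y)) if w is None else np.asarray(w, dtype=float)
    Xw = X * w[:, None]
    return np.linalg.solve(X.T @ Xw + alpha * np.eye(X.shape[1]), Xw.T @ y)

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 4))
y = X @ np.array([2.0, 0.0, -1.0, 0.5]) + rng.normal(size=200)
w = rng.uniform(1, 40, size=200)     # hypothetical weights, e.g. minutes played

alpha = 10.0
b_unweighted = ridge_fit(X, y, alpha)
b_weighted = ridge_fit(X, y, alpha, w)                 # same alpha, much weaker shrinkage
b_rescaled = ridge_fit(X, y, alpha * w.mean(), w)      # alpha scaled by the mean weight
b_normalised = ridge_fit(X, y, alpha, w / w.mean())    # weights normalised to mean 1
```

`b_rescaled` and `b_normalised` coincide, which is why \(\alpha\) should be tuned separately for each weighting scheme.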

HW 4 Comments

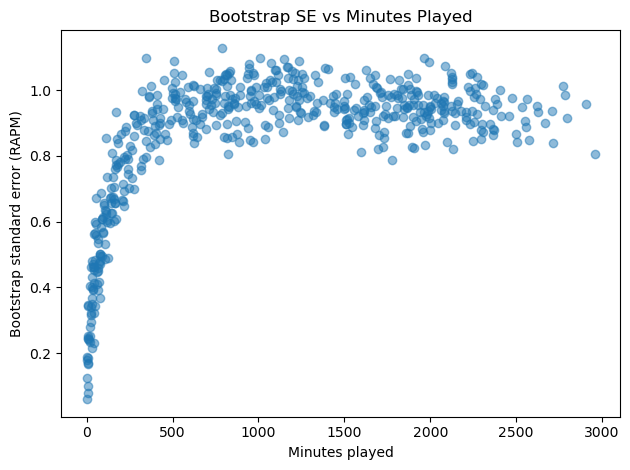

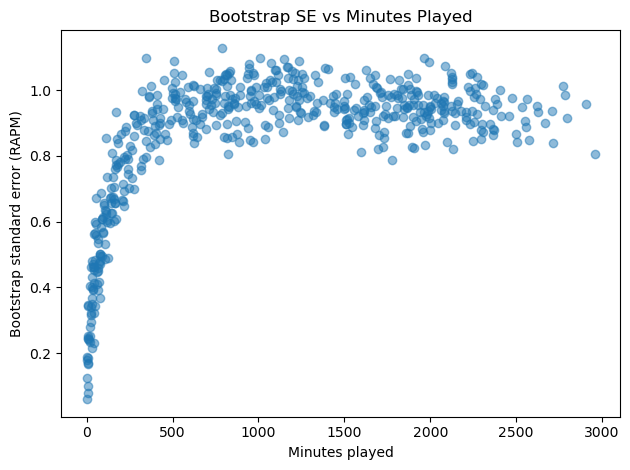

- Interpreting Graphs

- Bootstrap Uncertainty vs Minutes Played

Is uncertainty highest for low-minute players?

Week Summary

- Reading:

- Coding Vignette on Causal ML

- MVP Demos: contact me

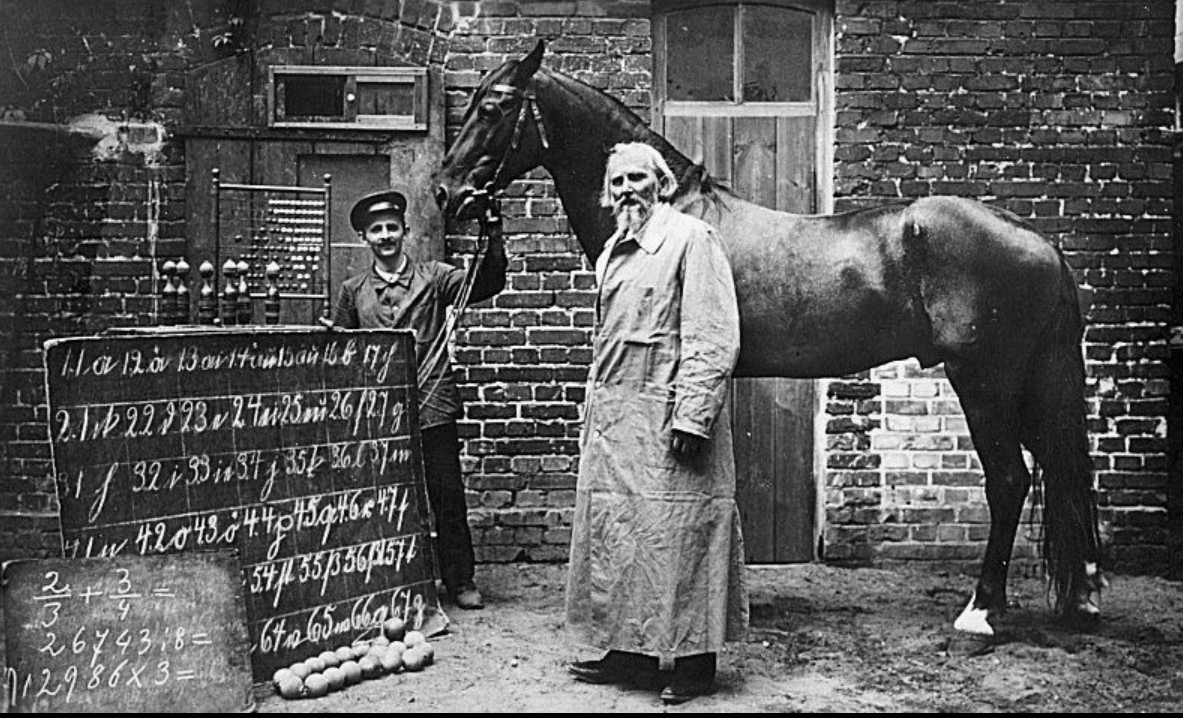

Black Boxes Gone Wrong

- Clever Hans: Horse that could add

wikipedia

Black Boxes Gone Wrong

- Dog vs. Wolf

- An image classifier trained on images of dogs and wolves used the presence of snow as its primary means of determining the class

Black Boxes Gone Wrong

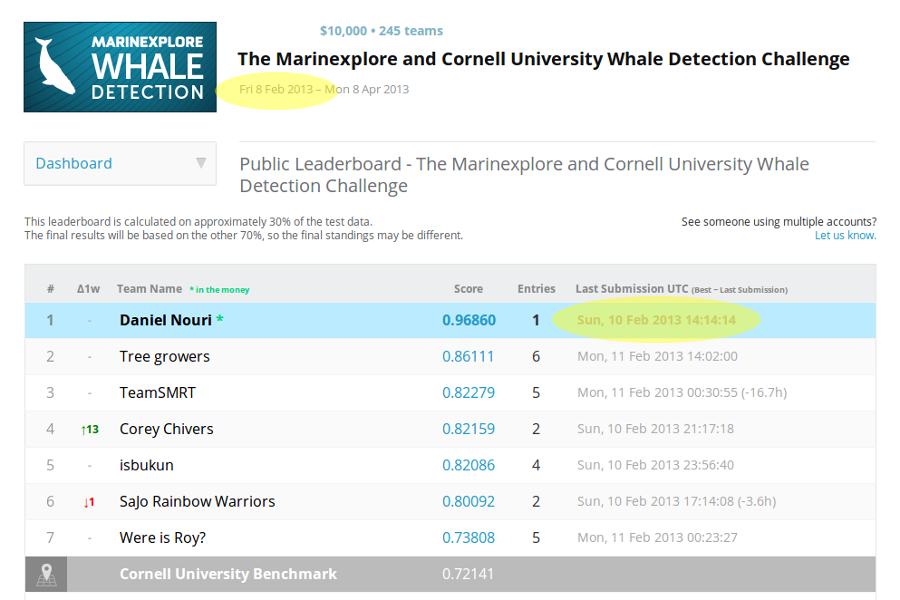

- Identifying Whales in Hydrophone Recordings

- Cornell University held a contest to identify Right Whales

- Winner had astonishingly high accuracy

- Algorithm worked by taking advantage of recording metadata

- Recordings without whales always had a length rounded to the nearest 10 milliseconds

- Enough to get AUC of 0.945

- After removing data leakage, AUC dropped from 0.99+ to 0.59
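A cheap leakage check is to score a "model" that only sees metadata. The sketch below uses purely synthetic clip lengths that mimic the rounding pattern described above (not the contest data) and computes the AUC of a one-bit feature that never touches the audio:

```python
import numpy as np

def auc(scores, labels):
    """AUC as P(random positive outranks random negative), ties counted as 1/2."""
    pos = scores[labels == 1]
    neg = scores[labels == 0]
    greater = (pos[:, None] > neg[None, :]).mean()
    ties = (pos[:, None] == neg[None, :]).mean()
    return greater + 0.5 * ties

rng = np.random.default_rng(0)
n = 2000
labels = rng.integers(0, 2, size=n)               # 1 = whale call present (synthetic)
duration_ms = rng.integers(1500, 2500, size=n)    # synthetic clip lengths in ms
# Mimic the leak: clips without whales get lengths rounded down to 10 ms.
duration_ms = np.where(labels == 0, (duration_ms // 10) * 10, duration_ms)

# Flag any clip whose length is not a multiple of 10 ms.
leak_score = (duration_ms % 10 != 0).astype(float)
leak_auc = auc(leak_score, labels)
```

Under these assumptions about nine in ten positive clips are flagged and no negative clip is, so the metadata-only AUC lands near 0.95. A score that high from a feature unrelated to the signal is the warning sign.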

When Interpretability?

- Sometimes we do not care about the reason for a prediction:

- Low Stakes

- Very highly vetted (OCR, modern image recognition, etc)

When Interpretability?

- Sometimes the reasons are critical

- Detecting pedestrians or cyclists in self-driving cars

- Extending or denying credit or loans

- Making a decision: Will a customer churn because we talked to them too much or too little?

- High $ value decisions with stakeholders

What is Interpretability?

- Biran and Cotton 2017:

Interpretability is the degree to which a human can understand the cause of a decision.

Interpretability Features

Interpretability is Contrastive

You want to explain why a certain prediction or decision was made instead of an alternative

How would my odds of approval change if I had less credit card debt?

Interpretability Features

Interpretability is Selective

You want to focus on the most important few features, not every single feature

Interpretability Features

Interpretability is Social

What counts as a good explanation depends on who you are talking to:

- Data Scientist Colleague

- Regulator

- Non-technical manager

- Customer

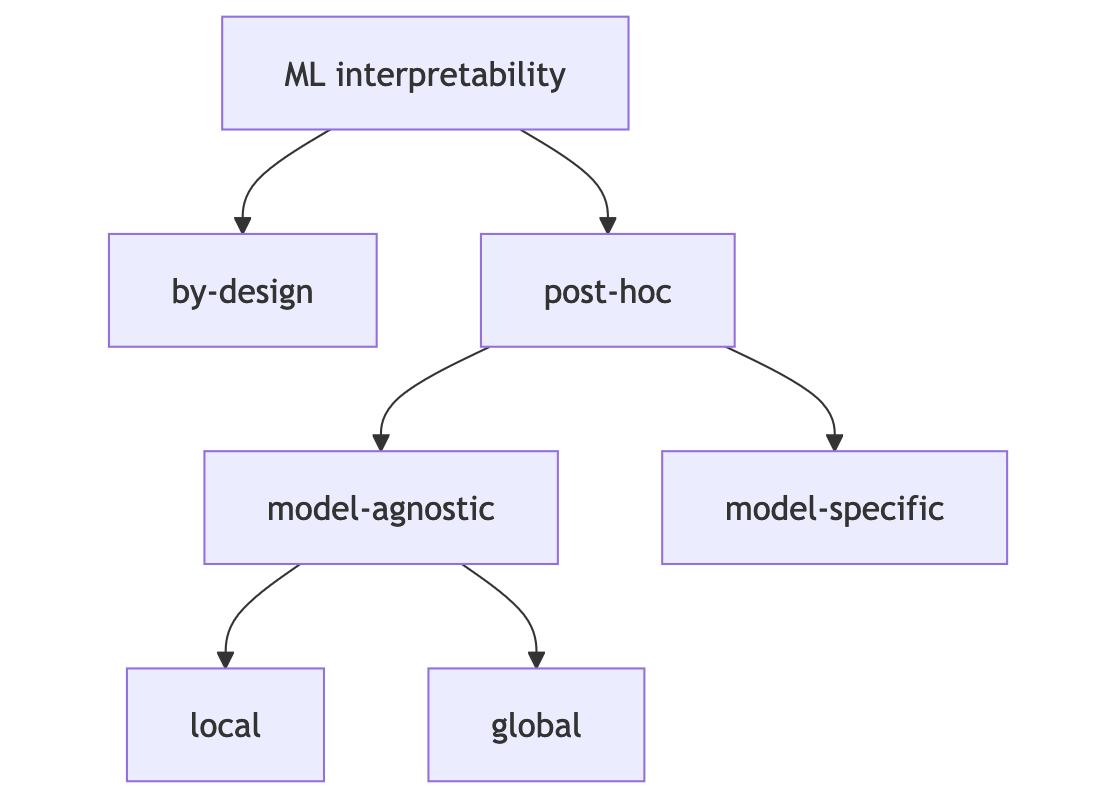

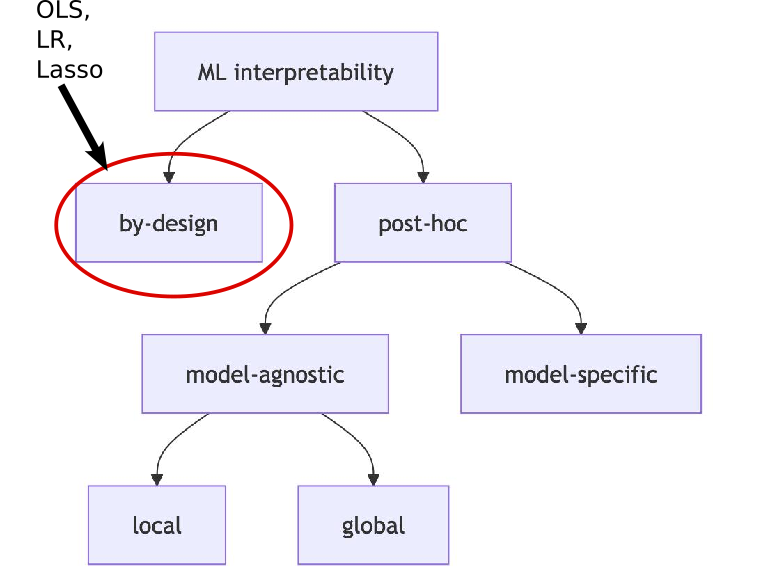

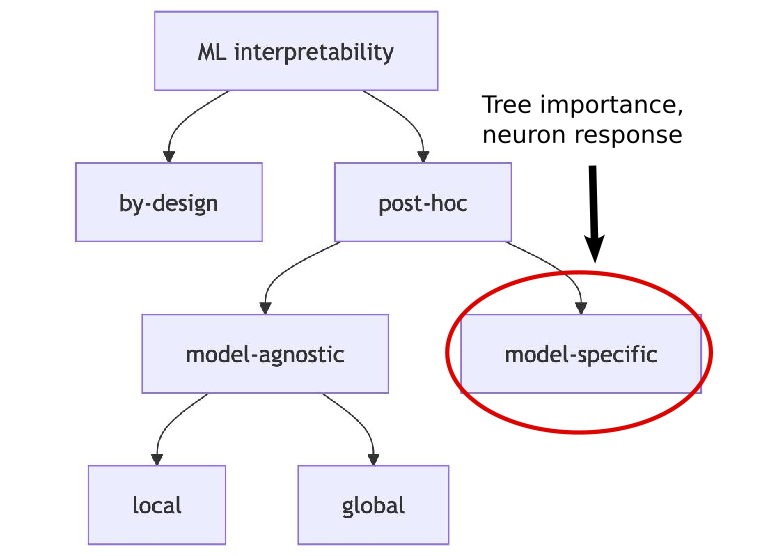

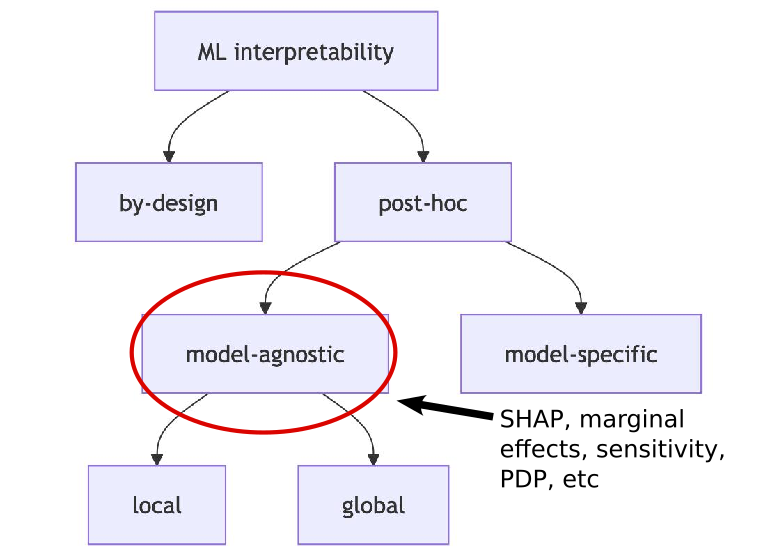

Interpretability Methods

ML Interpretability

Post-Hoc Model-Agnostic Ingredients

Everything uses SIPA:

- Sample from the data

- Intervene on some variables

- Predict the outcome

- Aggregate the results
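The four SIPA steps map onto a few lines of code. A minimal sketch, assuming the model is exposed as a `predict` function on a NumPy array; `sipa_effect` and `toy_predict` are hypothetical names used only for illustration:

```python
import numpy as np

def sipa_effect(predict, X, feature, value, n_samples=500, seed=0):
    """Average prediction when column `feature` is forced to `value`."""
    rng = np.random.default_rng(seed)
    # Sample: draw rows from the data.
    idx = rng.choice(len(X), size=min(n_samples, len(X)), replace=False)
    Xs = X[idx].copy()
    # Intervene: overwrite one column, leave the others as observed.
    Xs[:, feature] = value
    # Predict: query the black box on the modified rows.
    preds = predict(Xs)
    # Aggregate: here a plain mean; other methods aggregate differently.
    return preds.mean()

def toy_predict(X):
    # Hypothetical stand-in for a fitted model.
    return 3.0 * X[:, 0] + X[:, 1] ** 2

rng = np.random.default_rng(42)
X = rng.normal(size=(1000, 2))
low = sipa_effect(toy_predict, X, feature=0, value=-1.0)
high = sipa_effect(toy_predict, X, feature=0, value=1.0)
```

Repeating the call over a grid of values traces out a partial dependence curve; changing what is intervened on or how results are aggregated gives the other model-agnostic methods.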

Bikeshare Example

- Washington DC Hourly Bike Share Data

- Target is ‘cnt’ variable

- Features are weather, type of day, time of day, etc

| | season | yr | mnth | hr | holiday | weekday | workingday | weathersit | temp | atemp | hum | windspeed | cnt | cnt_2d_bfr | days_since_2011 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 47 | 1 | 0 | 1 | 0 | 0 | 1 | 1 | 1 | 0.22 | 0.1970 | 0.44 | 0.3582 | 5 | 985.0 | 2.0 |
| 48 | 1 | 0 | 1 | 1 | 0 | 1 | 1 | 1 | 0.20 | 0.1667 | 0.44 | 0.4179 | 2 | 985.0 | 2.0 |
| 49 | 1 | 0 | 1 | 4 | 0 | 1 | 1 | 1 | 0.16 | 0.1364 | 0.47 | 0.3881 | 1 | 985.0 | 2.0 |
| 50 | 1 | 0 | 1 | 5 | 0 | 1 | 1 | 1 | 0.16 | 0.1364 | 0.47 | 0.2836 | 3 | 985.0 | 2.0 |
| 51 | 1 | 0 | 1 | 6 | 0 | 1 | 1 | 1 | 0.14 | 0.1061 | 0.50 | 0.3881 | 30 | 985.0 | 2.0 |

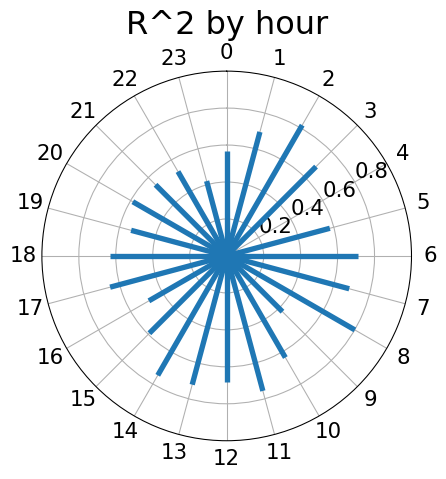

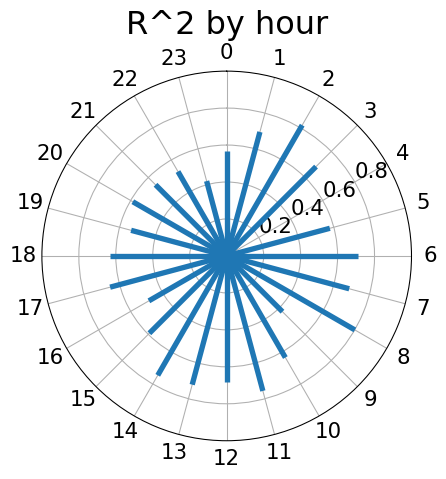

Bikeshare Model

- How does our Random Forest Perform?

RMSE: 81.1

\(R^2\): 0.864

- Not awful, but this is very lazy
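For reference, both metrics are easy to compute by hand: RMSE is in the units of the target (here, rentals per hour) and \(R^2\) compares the squared error to that of always predicting the mean. A minimal sketch on made-up numbers, not the bikeshare predictions:

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error, in the units of the target."""
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r2(y_true, y_pred):
    """1 - (residual sum of squares) / (total sum of squares around the mean)."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

# Made-up values for illustration only.
y_true = np.array([10.0, 20.0, 30.0, 40.0])
y_pred = np.array([12.0, 18.0, 33.0, 37.0])

score_rmse = rmse(y_true, y_pred)
score_r2 = r2(y_true, y_pred)
```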

Bikeshare Model by Hour

- Let’s look at the predictive accuracy by hour

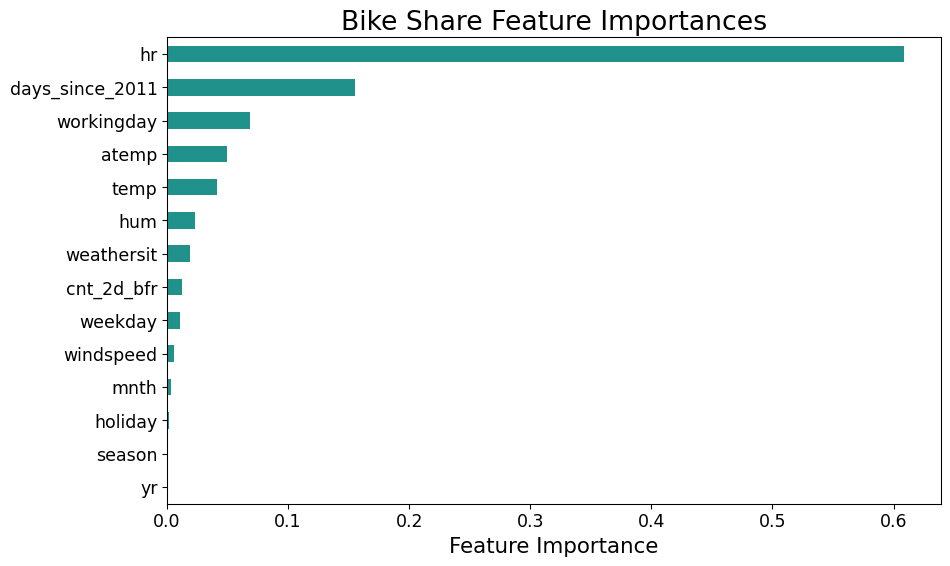
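A per-hour breakdown like this can be computed by grouping test-set errors on the hour column. A minimal sketch with made-up values; `rmse_by_group` is a hypothetical helper and `hr` stands in for the dataset's hour column:

```python
import numpy as np

def rmse_by_group(y_true, y_pred, groups):
    """RMSE computed separately within each group label (e.g. hour of day)."""
    out = {}
    for g in np.unique(groups):
        mask = groups == g
        out[int(g)] = float(np.sqrt(np.mean((y_true[mask] - y_pred[mask]) ** 2)))
    return out

# Made-up stand-in for test-set targets and predictions.
hr = np.array([0, 0, 8, 8, 17, 17])
y_true = np.array([5.0, 7.0, 300.0, 340.0, 500.0, 420.0])
y_pred = np.array([6.0, 6.0, 280.0, 360.0, 440.0, 480.0])

by_hour = rmse_by_group(y_true, y_pred, hr)
```

Because the counts vary so much over the day, it can help to read the per-hour RMSE next to the typical count for that hour.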

Tree Importance

Flaws of Tree Importance

- Just tells us magnitude

- No direction

- No interactions

- No local dependence

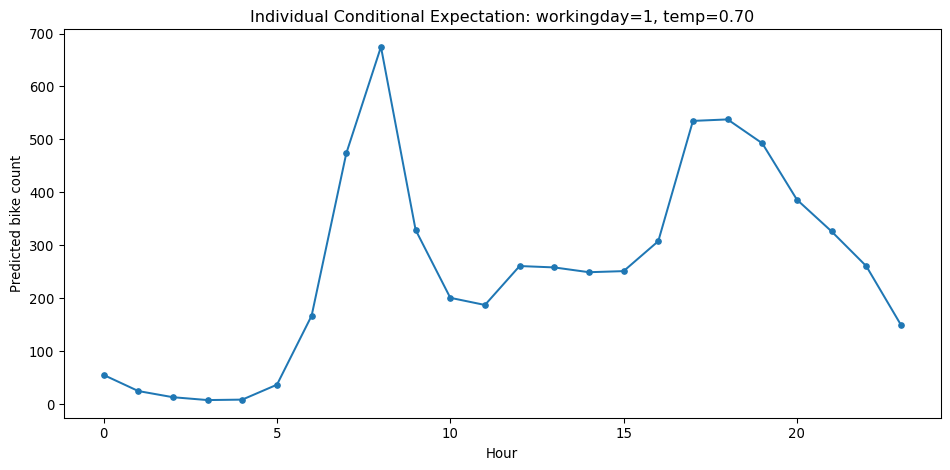

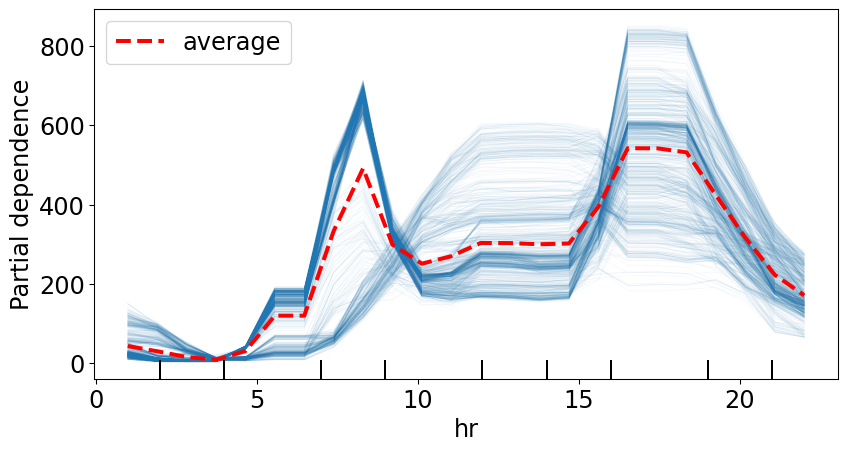

Local Versus Global

- Local looks at the impact of features at a specific data point.

- Ceteris Paribus plots (or “Slope” or “Marginal Effect”):

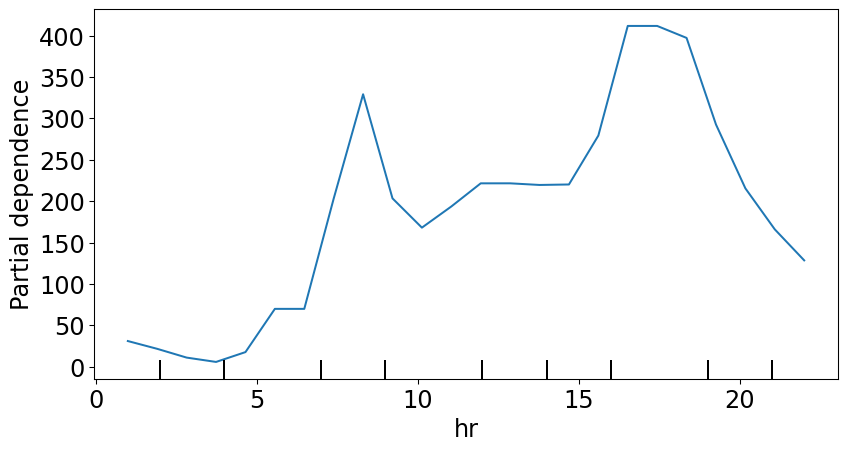

Local Versus Global

- Global looks at the impact of the feature averaged over many instances

- Partial Dependence Plot averages all of the Individual Conditional Expectation (ICE) curves
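The local and global views differ only in the last step. A minimal sketch, assuming a `predict` function on a NumPy array; the toy model is hypothetical and includes an interaction on purpose:

```python
import numpy as np

def ice_curves(predict, X, feature, grid):
    """One curve per row: predictions as `feature` sweeps `grid`, other columns fixed."""
    curves = np.empty((len(X), len(grid)))
    for j, v in enumerate(grid):
        Xv = X.copy()
        Xv[:, feature] = v
        curves[:, j] = predict(Xv)
    return curves

def toy_predict(X):
    # Hypothetical model: the effect of column 0 depends on column 1.
    return X[:, 0] * X[:, 1]

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 2))
grid = np.linspace(-2, 2, 9)

ice = ice_curves(toy_predict, X, feature=0, grid=grid)   # local: one curve per instance
pdp = ice.mean(axis=0)                                   # global: average of those curves
```

In this toy model the individual curves slope up or down depending on the second feature, while their average is much flatter. A flat partial dependence plot therefore does not prove a feature is unimportant.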

SHAP

- SHAP is a newish tool that is complex but has some very nice additional features:

- Divides attribution additively between the features for each prediction/decision

- Can easily make comparisons (why did you approve person A instead of person B?)

- Some very good theoretical properties!

Shapley Values

- The idea of Shapley is based on averaging lots of conditional expectations:

- Target feature is \(x_t\)

- Conditional features are \(\mathbf{x}_c\)

- Look at: \[ \Delta_t(\mathbf{x}_c) = E(\hat{f} \mid x_t, \mathbf{x}_c) - E(\hat{f} \mid \mathbf{x}_c) \]

- Suppose we know the features \(\mathbf{x}_c\). How much does knowing \(x_t\) change the expected prediction?

Shapley Values

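- To get the Shapley value, we take a weighted average of the \(\Delta_t(\mathbf{x}_c)\) over all possible combinations \(c\) of the other features: \[ \phi_t(\mathbf{x}) = \sum_{c} w_c \, \Delta_t(\mathbf{x}_c), \qquad w_c = \frac{|c|! \, (p - |c| - 1)!}{p!} \] where \(p\) is the number of features and \(|c|\) is the number of features in \(c\); the weights sum to 1, so this is an average in which every coalition size counts equally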

- Combinations of features are called “coalitions”

- For each data point and each feature get a “contribution”
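With only a few features, every coalition can be enumerated directly. The sketch below uses the standard Shapley weights, \(|c|!\,(p-|c|-1)!/p!\) for a coalition \(c\) among \(p\) features. It is a minimal illustration that assumes the expectation over missing features is approximated by filling them in from background rows, which ignores dependence between features; the model and data are hypothetical:

```python
import numpy as np
from itertools import combinations
from math import factorial

def coalition_value(predict, x, background, coalition):
    """Approximate E[f | x_c]: fix coalition features at x, take the rest from background rows."""
    Z = background.copy()
    cols = list(coalition)
    if cols:
        Z[:, cols] = x[cols]
    return predict(Z).mean()

def shapley_values(predict, x, background):
    p = len(x)
    phi = np.zeros(p)
    for t in range(p):
        others = [j for j in range(p) if j != t]
        for size in range(p):
            weight = factorial(size) * factorial(p - size - 1) / factorial(p)
            for c in combinations(others, size):
                delta = (coalition_value(predict, x, background, c + (t,))
                         - coalition_value(predict, x, background, c))
                phi[t] += weight * delta
    return phi

def toy_predict(X):
    # Hypothetical black box with an interaction between columns 0 and 1.
    return X[:, 0] * X[:, 1] + 2.0 * X[:, 2]

rng = np.random.default_rng(3)
background = rng.normal(size=(100, 3))
x = np.array([1.0, -0.5, 2.0])
phi = shapley_values(toy_predict, x, background)
```

The contributions add up to the prediction at `x` minus the average prediction over the background rows, which is the additive attribution property.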

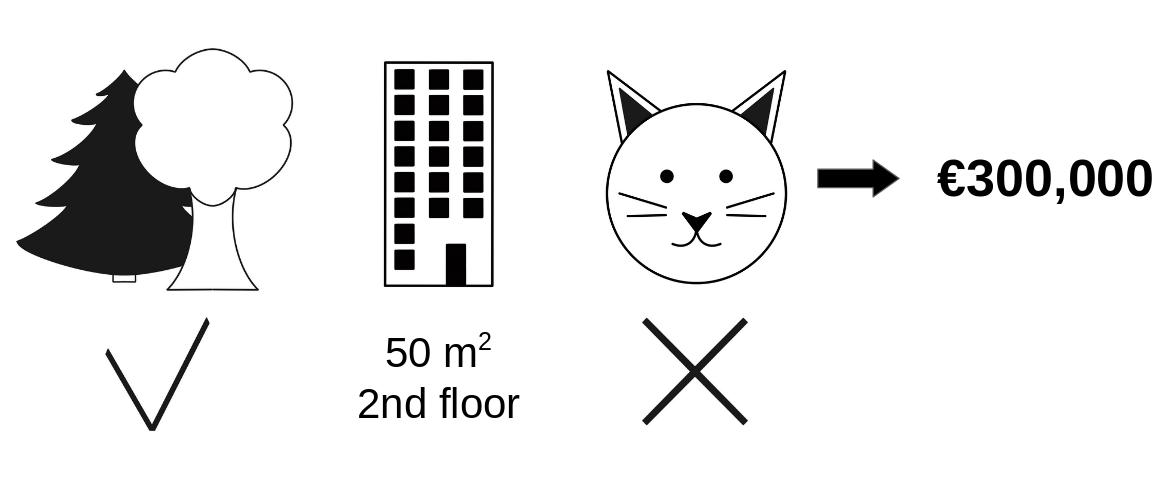

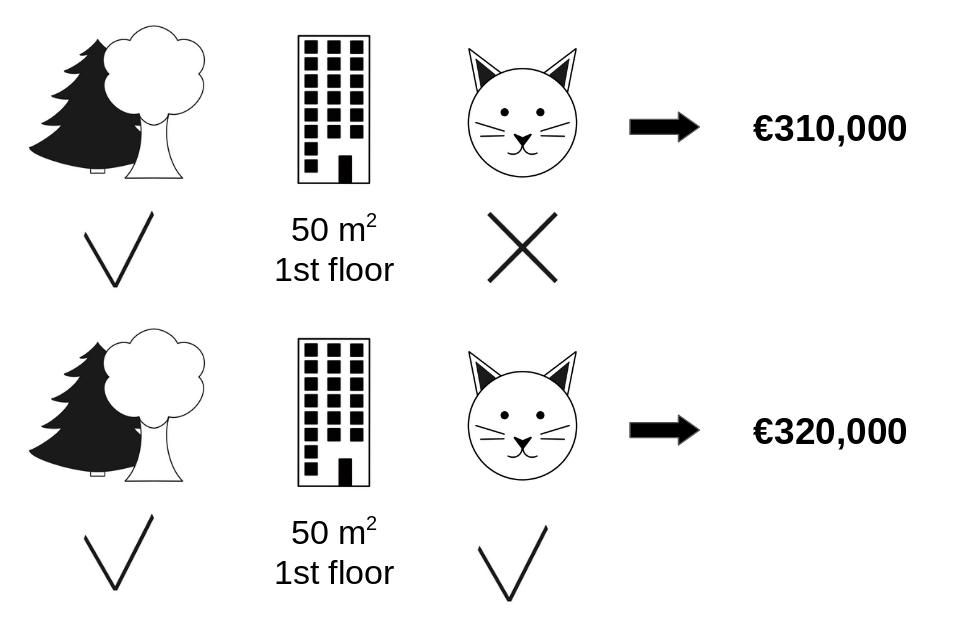

Shapley Example

- Consider an apartment pricing model

- Variables are:

- Apartment size

- floor level

- Can you have a cat as a pet?

- Is there a park nearby?

- Target is the predicted sales price

Shapley Example

- Our data point is 50\(m^2\), 1st floor, bans cats, near parks

molnar 17

Shapley Example

- Consider full coalition

- Just look at the difference in prediction between banning cats and not

molnar 17

Shapley Example

- What if the feature were continuous, like floor area, instead of the cat ban?

- Then you average over model predictions of all floor areas…

- Technically, you do this by resampling from your training data

- Same goes for when area is in your coalition

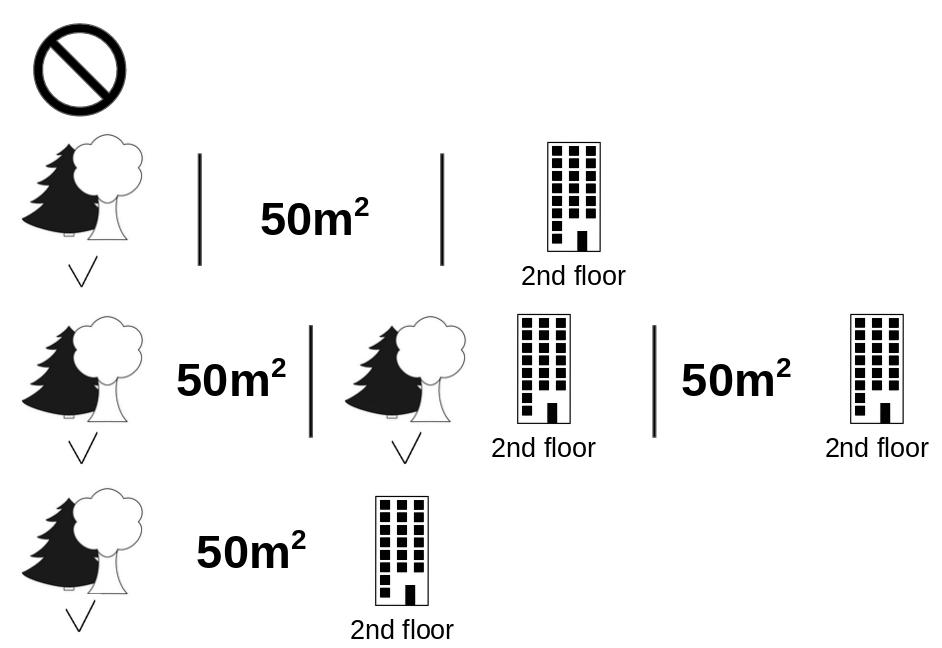

Shapley Coalitions

- Here are all the coalitions behind a single Shapley value

SHAP

- Overall this is called SHapley Additive exPlanations, or SHAP

- Big challenge: Too many possible coalitions

- Need Approximate algorithms:

- Monte Carlo

- KernelSHAP

- TreeSHAP

- Others…
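The Monte Carlo option can be sketched as sampling random feature orderings instead of enumerating every coalition. This illustrates the sampling idea only, not how KernelSHAP or TreeSHAP work internally. It assumes missing features are filled in from background rows, and the model and data are hypothetical:

```python
import numpy as np

def shapley_permutation(predict, x, background, n_perm=200, seed=0):
    """Estimate Shapley values by averaging marginal contributions over random feature orders."""
    rng = np.random.default_rng(seed)
    p = len(x)
    phi = np.zeros(p)
    for _ in range(n_perm):
        order = rng.permutation(p)
        Z = background.copy()              # empty coalition: all features from background
        prev = predict(Z).mean()
        for t in order:
            Z[:, t] = x[t]                 # add feature t to the coalition
            cur = predict(Z).mean()
            phi[t] += cur - prev           # marginal contribution of t in this order
            prev = cur
    return phi / n_perm

def toy_predict(X):
    # Hypothetical black box with an interaction term.
    return X[:, 0] * X[:, 1] + 2.0 * X[:, 2] - X[:, 3]

rng = np.random.default_rng(7)
background = rng.normal(size=(200, 4))
x = np.array([1.0, -0.5, 2.0, 0.3])
phi = shapley_permutation(toy_predict, x, background)
```

Each ordering costs one prediction pass per feature, so the work grows with the number of sampled orderings rather than with the number of coalitions. The contributions still add up to the prediction minus the background average, because each ordering telescopes.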

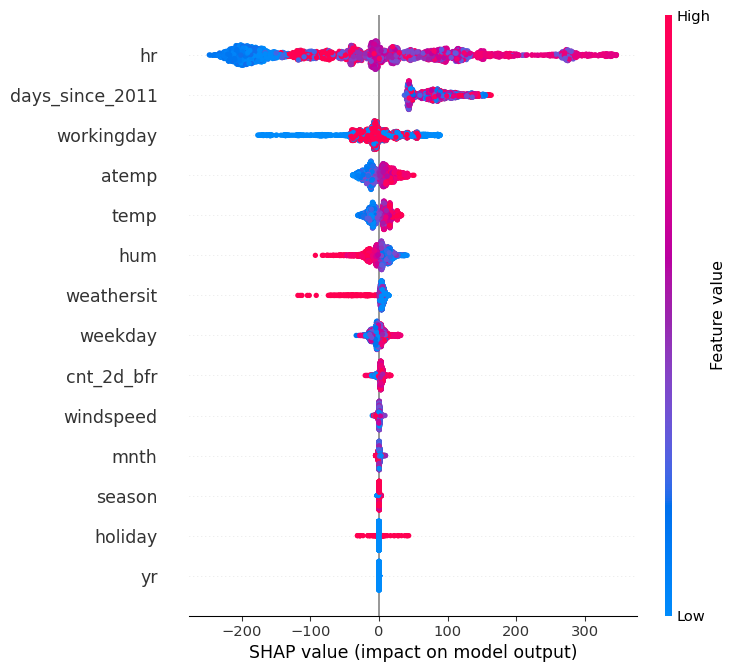

SHAP Importance

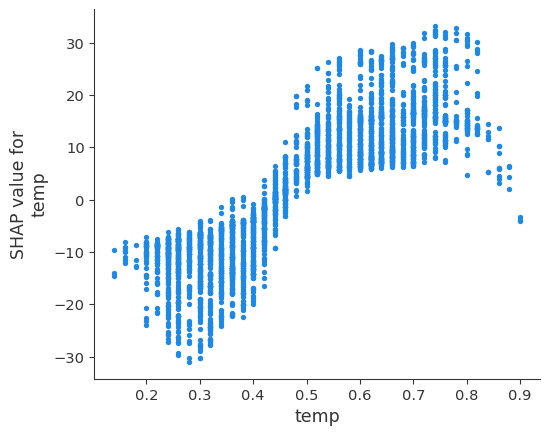

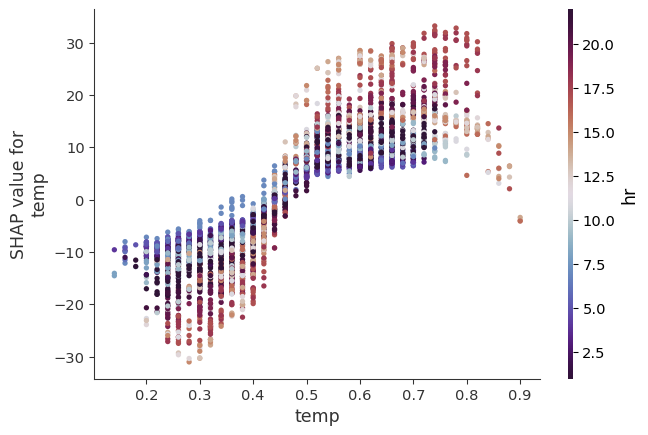

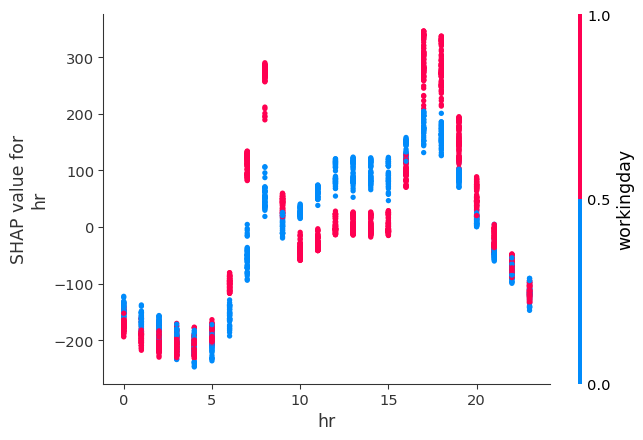

SHAP Dependence Plot

Interactions by default

SHAP Interactions Plot

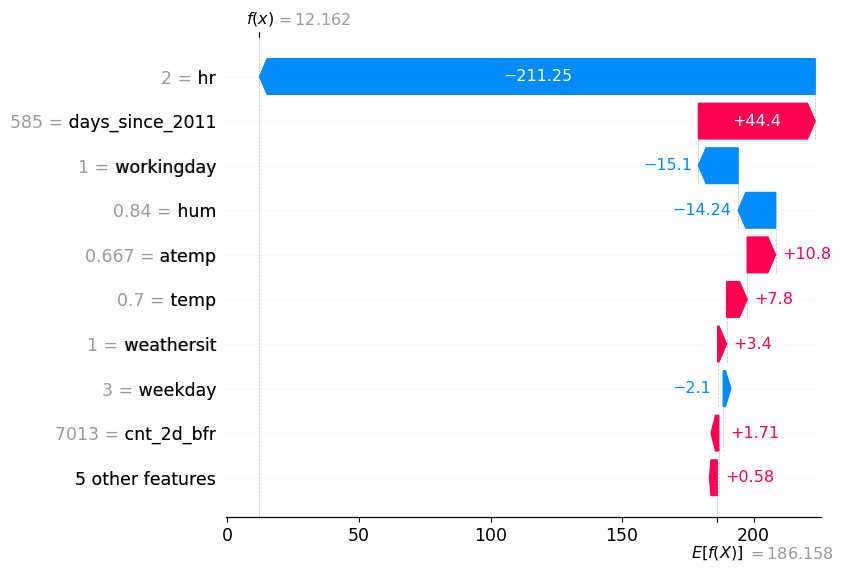

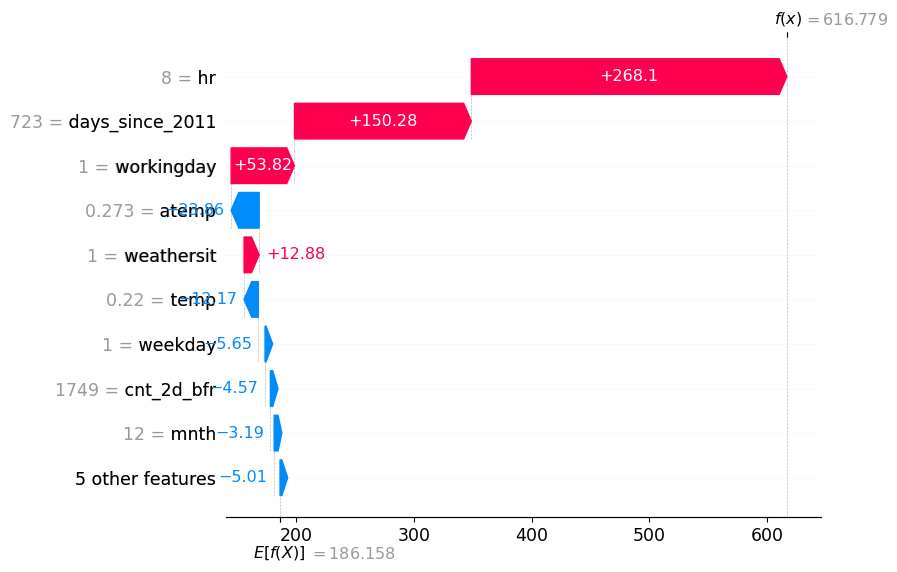

SHAP Waterfall Plot

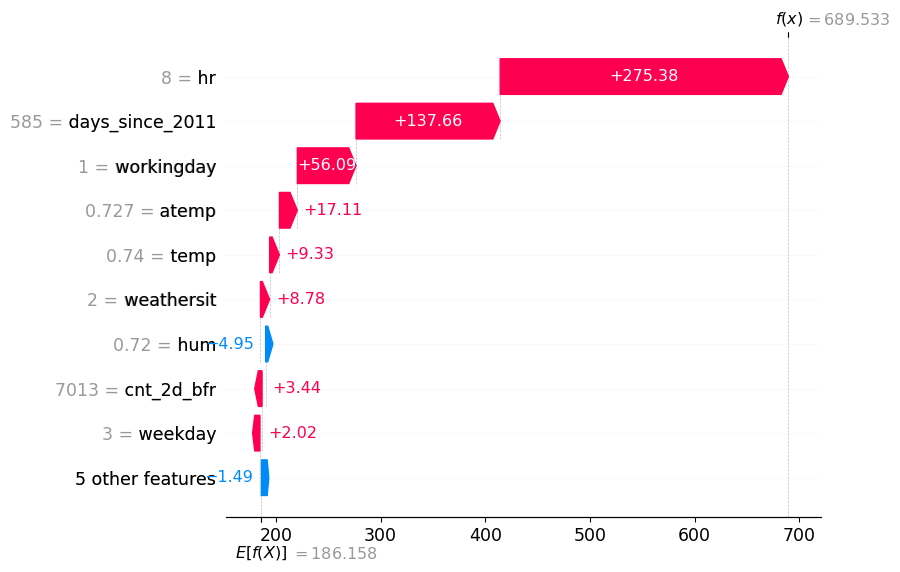

SHAP Waterfall Plot v2

SHAP Plot for the worst prediction

2012-12-27

Thanks!

DATA 622