| | Location | Loc | Population | Marriage | Divorce | WaffleHouses | South |
|---|---|---|---|---|---|---|---|
| 0 | Alabama | AL | 4.78 | 20.2 | 12.7 | 128 | 1 |
| 1 | Alaska | AK | 0.71 | 26.0 | 12.5 | 0 | 0 |
| 2 | Arizona | AZ | 6.33 | 20.3 | 10.8 | 18 | 0 |
| 3 | Arkansas | AR | 2.92 | 26.4 | 13.5 | 41 | 1 |
| 4 | California | CA | 37.25 | 19.1 | 8.0 | 0 | 0 |

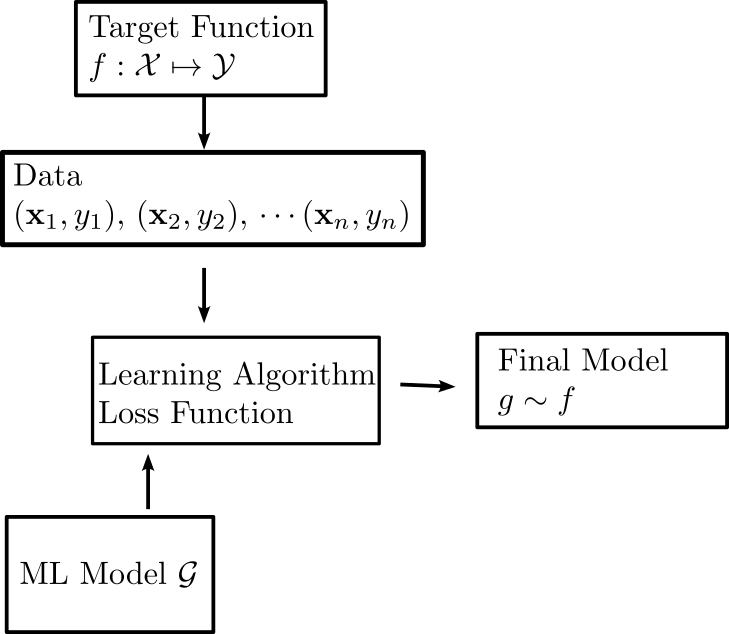

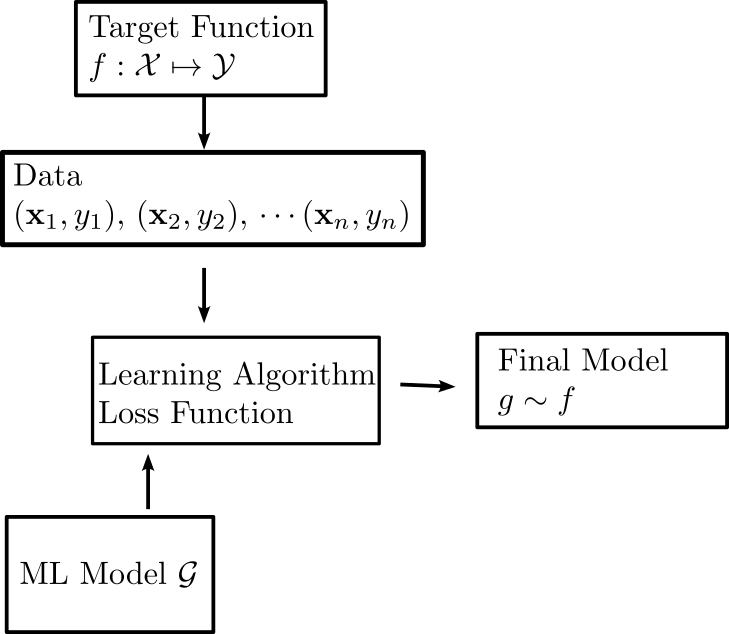

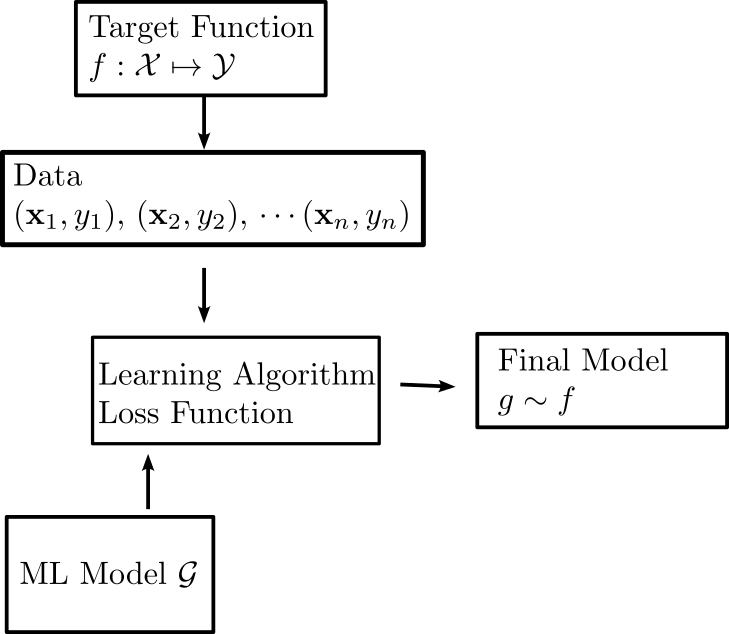

DATA 622 Meetup 3: The Linear Model

2026-02-09

Week Summary

- HW 2 on Linear Regression is due next Sunday at midnight

- Post/Contact others about project

- Reading: Chapter 3 of ISLP

- One coding vignette on `statsmodels`

nyhackr meetup Feb 17!

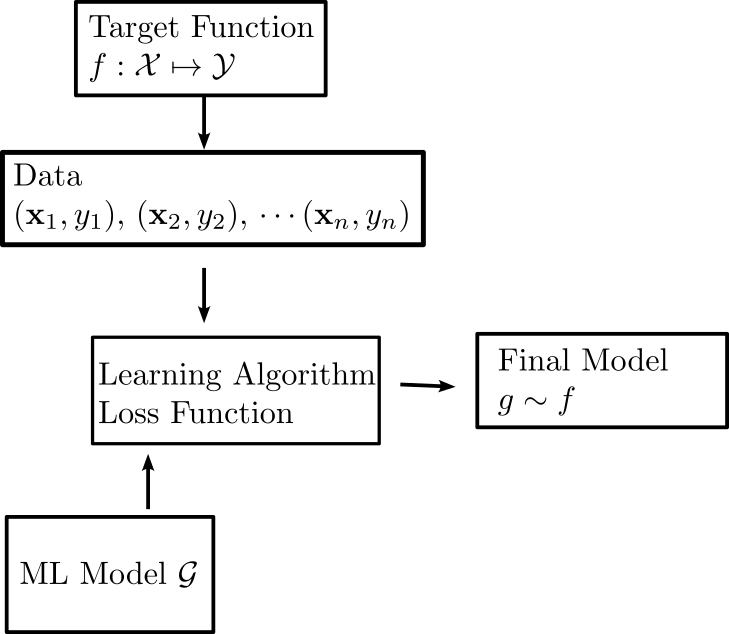

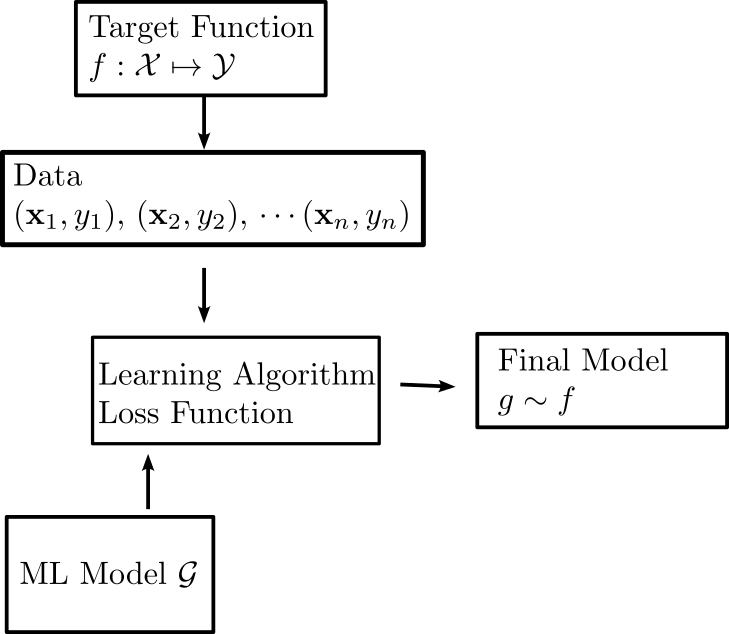

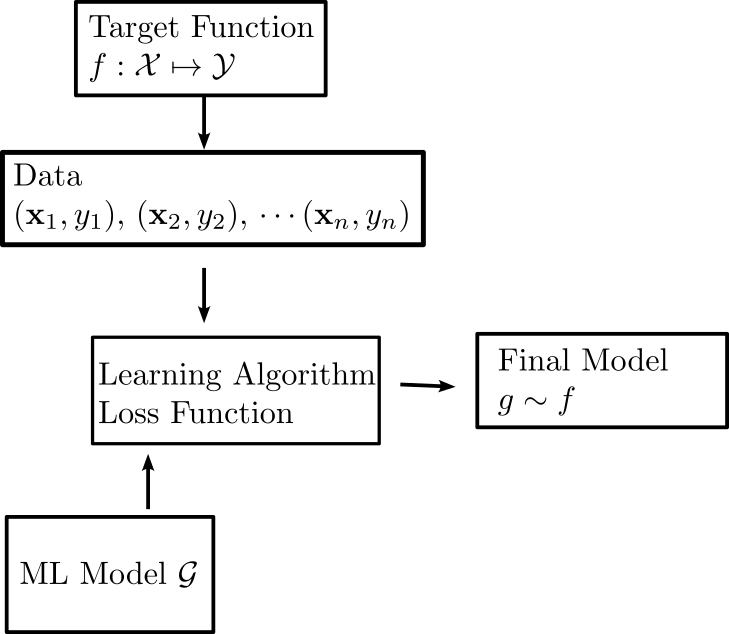

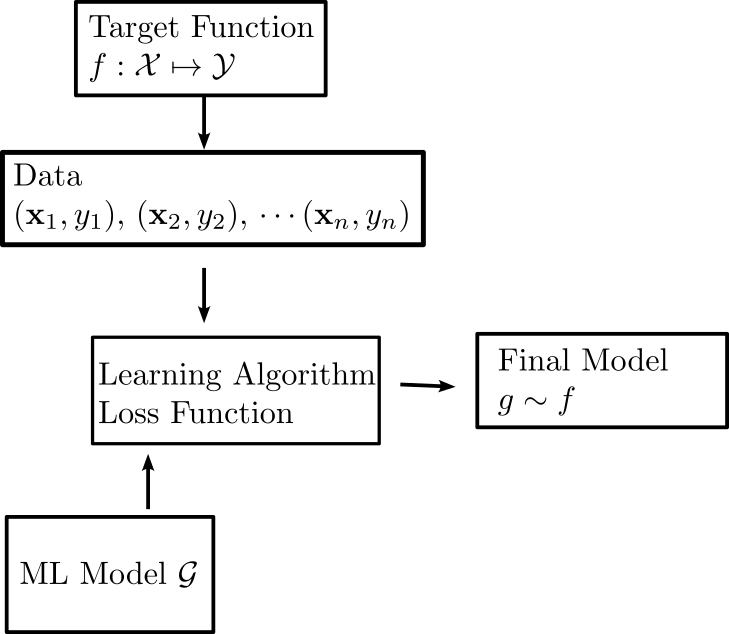

Linear Regression Revisited

- Prediction is a linear function of the features:

\[ g(\mathbf{x}) = w_1 x_1 + w_2x_2 + \cdots + w_nx_n + c = \sum_{i=1}^n w_ix_i + c = \mathbf{w}^T\mathbf{x} + c \]

- Most basic and fundamental regression model

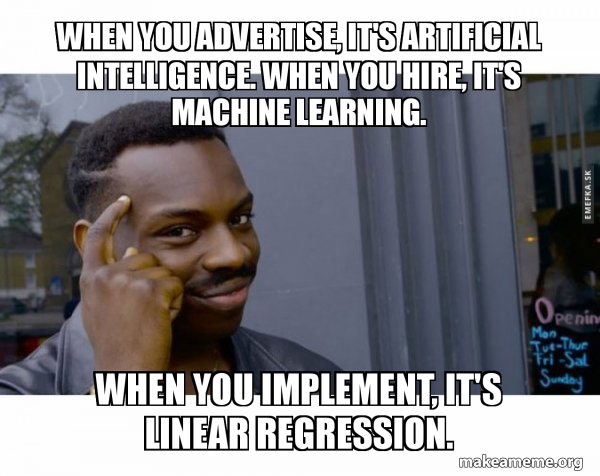

Why Cover this Again?

- Probably most common production ML model

Question

- What do you think are the advantages of linear regression?

Linear Regression Advantages

- Easier to train

- Easier to understand

- Easier to fix

Training is fast and exact

\[ \mathbf{w} = \left(\mathbf{X}^T\mathbf{X}\right)^{-1}\mathbf{X}^T \mathbf{y} \]

- Much cheaper than a neural network to run

- Can constantly update huge models on the fly on tiny hardware
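A minimal sketch of the closed-form fit, assuming synthetic data and NumPy. The names `fit_normal_equations`, `X_raw`, and `true_w` are illustrative and not from the slides. It solves the normal equations directly instead of forming the inverse, which is usually the numerically safer choice.

```python
import numpy as np

def fit_normal_equations(X, y):
    """Solve (X^T X) w = X^T y for w without forming the inverse explicitly."""
    return np.linalg.solve(X.T @ X, X.T @ y)

rng = np.random.default_rng(0)
m = 200
X_raw = rng.normal(size=(m, 2))                  # synthetic features (assumption)
X = np.column_stack([X_raw, np.ones(m)])         # design matrix with a column of ones
true_w = np.array([2.0, -1.0, 0.5])              # last entry plays the role of the intercept
y = X @ true_w + rng.normal(scale=0.1, size=m)   # linear signal plus Gaussian noise

w_hat = fit_normal_equations(X, y)
w_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)  # reference solution from NumPy
print(w_hat)
```

Both routes should agree to numerical precision; `np.linalg.lstsq` is the more robust option when the columns of the design matrix are nearly collinear.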

Math Aside

Here \(\mathbf{X}\) is the design matrix \[ \mathbf{X} = \begin{pmatrix} x_{11} & \cdots & x_{1n} & 1 \\ \vdots & \ddots & \vdots & \vdots \\ x_{m1} & \cdots & x_{mn} & 1 \end{pmatrix} \]

It stacks all \(m\) observations in the rows, with a final column of ones for the intercept

Linear algebra is math worth learning

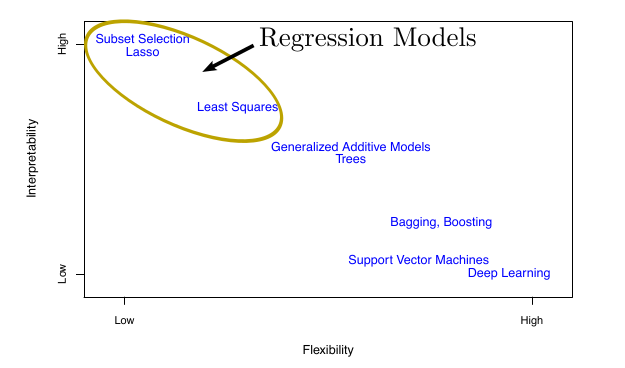

Linear Models are Interpretable

- Coefficients may tell you causal effects

- Perfect for building intuition during EDA

ISLP

Start with regression

It’s rarely a mistake to start with linear regression, even if you end up just using it as a benchmark to compare to.

Linear Regression Basics

- Features \(\mathbf{x} \in \mathcal{X}\):

- Combination of real numbers and categorical variables

Linear Regression Basics

- Output space \(y \in \mathcal{Y}\) is just real numbers

Linear Regression Basics

- Hypotheses/Models \(g\in\mathcal{G}\) are linear functions \[ g(\mathbf{x}) = w_1x_1+\cdots + w_nx_n = \mathbf{w}^T\mathbf{x} \]

Linear Regression Basics

- Simplest Learning Algorithm: Minimize Loss Function \[ \min_{\mathbf{w}} \sum_{i=1}^n (\mathbf{w}^T\mathbf{x}_i-y_i)^2 \]

Linear Regression Basics

- Maximum likelihood with Gaussian errors: \[ L(\mathbf{w}) = \prod_{i=1}^n \exp\left(-\frac{(\mathbf{w}^T\mathbf{x}_i - y_i)^2}{2\sigma^2}\right) \]

- Question: Do you know why?
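One way to see the link between this likelihood and the squared-error loss is to take the logarithm, which turns the product into a sum (the Gaussian normalizing constant is omitted here, as on the slide, because it does not depend on \(\mathbf{w}\)):

\[ \log L(\mathbf{w}) = \sum_{i=1}^n -\frac{(\mathbf{w}^T\mathbf{x}_i - y_i)^2}{2\sigma^2} = -\frac{1}{2\sigma^2} \sum_{i=1}^n (\mathbf{w}^T\mathbf{x}_i - y_i)^2 \]

Since \(\sigma^2 > 0\) is fixed, maximizing \(\log L(\mathbf{w})\) over \(\mathbf{w}\) is the same as minimizing \(\sum_{i=1}^n (\mathbf{w}^T\mathbf{x}_i - y_i)^2\), so the maximum likelihood estimate coincides with the least squares solution.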

Linear Regression Basics

- Exact Formula:

\[ \mathbf{w} = (X^TX)^{-1}X^T\mathbf{y} \]

- \(X\) is called the design matrix

- Contains the data

Linear Regression Basics:

- Learning algorithm works if the number of data points \(n\) is greater than the number of features \(p\)

- If there are more features than data points, the problem is ill-posed, but we will show some tricks in a few weeks
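A small sketch of why the problem is ill-posed when features outnumber data points, using synthetic data (the sizes and variable names are assumptions for illustration). The matrix \(X^TX\) loses rank, so it has no inverse, and many different weight vectors fit the training data exactly.

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 5, 8                      # fewer data points than features (assumption)
X = rng.normal(size=(n, p))
y = rng.normal(size=n)

gram = X.T @ X                   # p x p matrix that the exact formula must invert
rank = np.linalg.matrix_rank(gram)
print(rank, "<", p)              # rank is at most n, so gram is singular

# Two different weight vectors that reproduce the training targets:
w_min_norm = np.linalg.pinv(X) @ y       # minimum-norm solution
null_dir = np.linalg.svd(X)[2][-1]       # a direction that X maps to (numerically) zero
w_other = w_min_norm + 10.0 * null_dir   # moving along it leaves the fit unchanged
```

Because both vectors give the same training predictions, the data alone cannot choose between them; some extra constraint is needed to pick one.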

Prediction versus Inference

- Linear regression coefficients give easy interpretation:

- \(w_i\) is the increase in predicted \(y\) for a 1 unit increase in \(x_i\), holding the other features fixed

- But this only refers to predictions, not to interventions

- Whether we care about inference or prediction will change what variables we will include

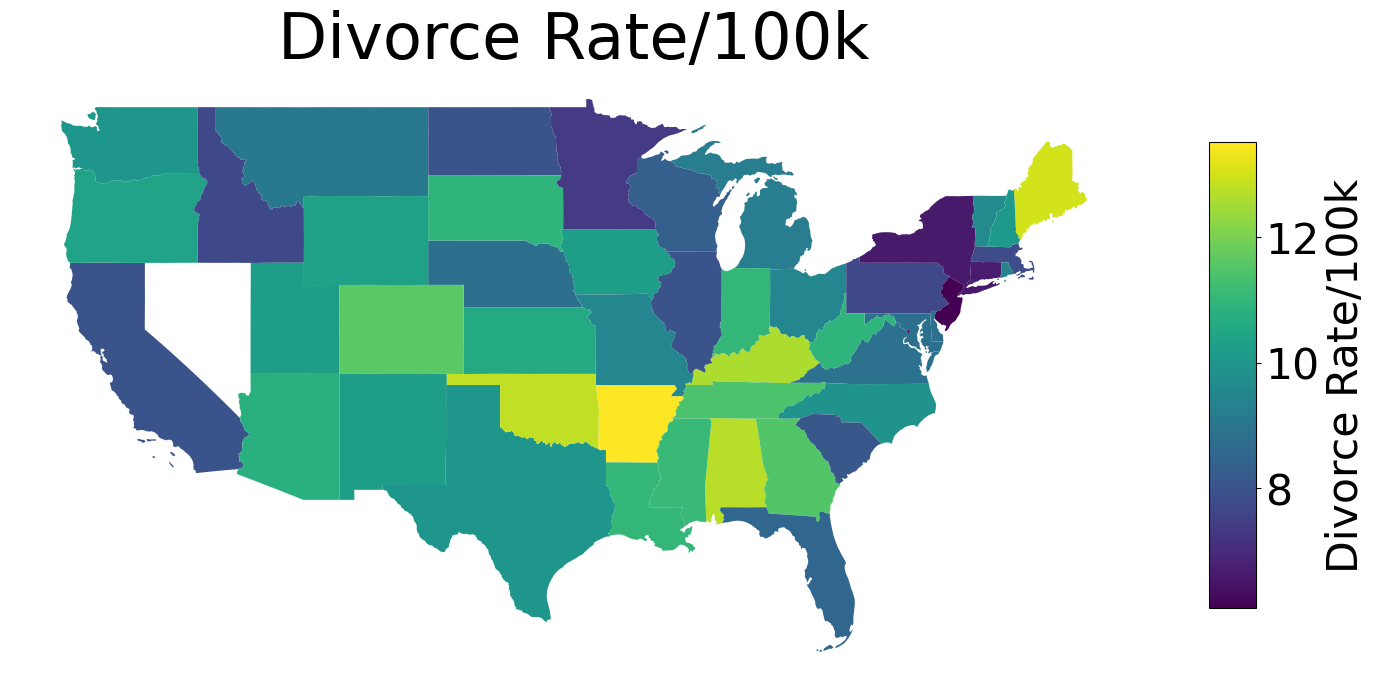

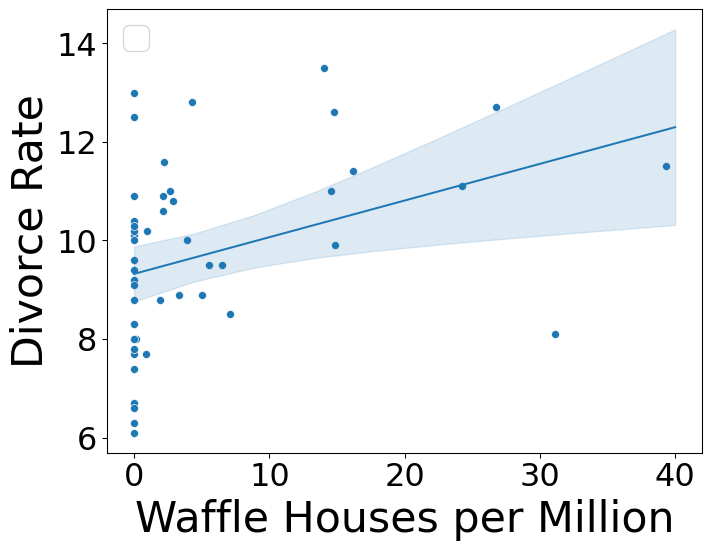

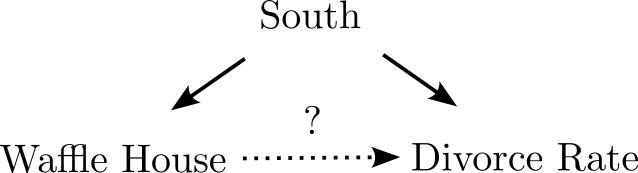

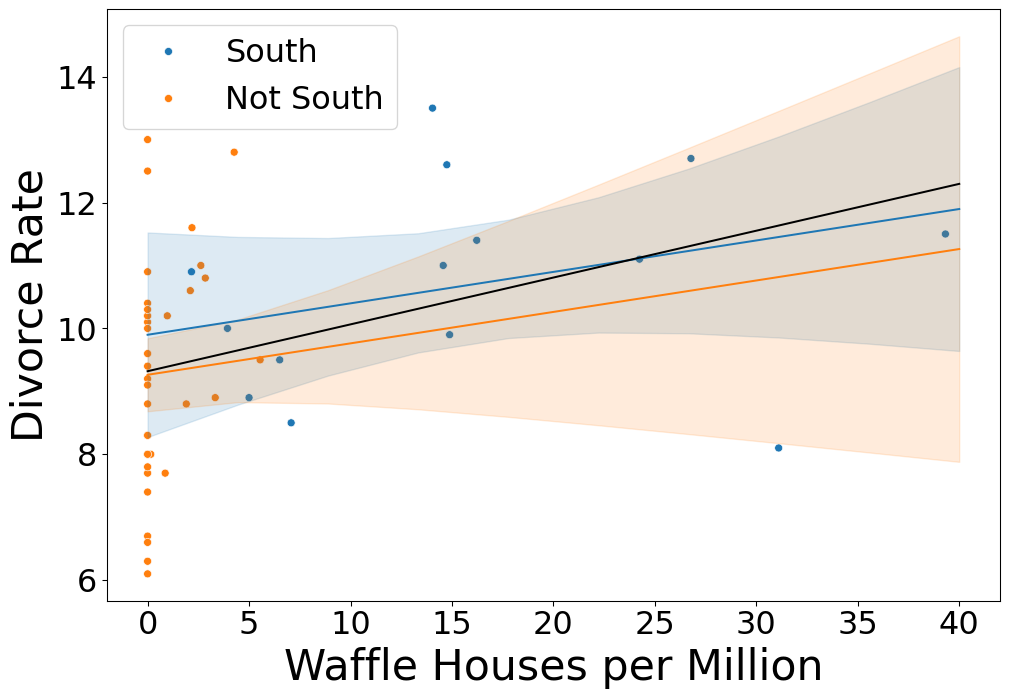

Can Waffle Houses Predict Divorce Rate?

Waffle Map

Divorce Rate Map

Waffle House Example

Confounding


- Does anyone here believe that building waffle houses will increase the divorce rate?

- Or demolishing them will decrease it?

Confounding

- We all know Correlation does not equal Causation

- Important for linear (and other) model interpretation

Confounding

Confounding describes a situation where the predictions of a model differ from the result of experimentally manipulating the variables of that model.

- In the waffle house case, the fact that building waffle houses won’t change the divorce rate is an example of confounding.

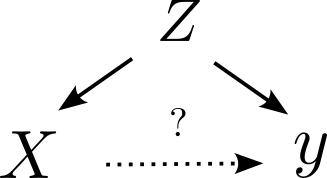

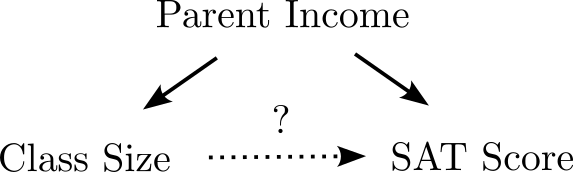

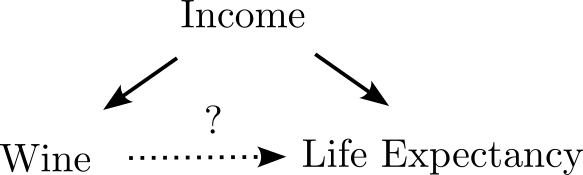

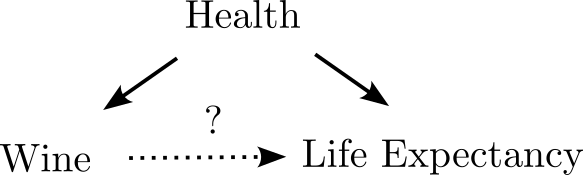

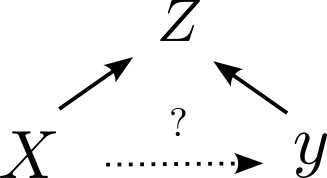

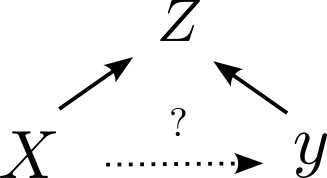

Types of Confounds: Fork

- Omitted variable impacts both dependent and independent variable.

Can you tell me an example of this?


Control for Confounds

If you suspect a variable is a common cause of both your dependent and independent variables, you must include it in your regression for the coefficients to be interpretable. This is called:

- controlling for the confounder

- stratifying by the confounder

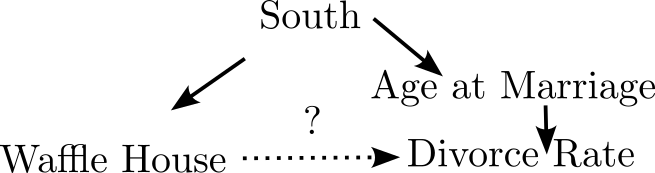

Waffle Fork

- Being in the South drives both the number of Waffle Houses and the divorce rate

Waffle Fork

- Mediated by earlier marriage age

Controlling for South

- Regression coefficient drops
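The fork pattern can be reproduced with a small simulation. This is a sketch on synthetic data, not the Waffle House dataset: `z` stands in for a common cause such as South, `x` and `y` stand in for the two variables it drives, and the helper `ols` is a hypothetical name. By construction `x` has no direct effect on `y`.

```python
import numpy as np

def ols(features, y):
    """Least-squares coefficients; an intercept column is appended last."""
    X = np.column_stack(features + [np.ones(len(y))])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

rng = np.random.default_rng(2)
n = 5000
z = rng.binomial(1, 0.5, size=n).astype(float)   # common cause (synthetic)
x = 3.0 * z + rng.normal(size=n)                 # z raises x
y = 2.0 * z + rng.normal(size=n)                 # z raises y; x plays no role

naive = ols([x], y)[0]          # coefficient on x, ignoring z
adjusted = ols([x, z], y)[0]    # coefficient on x, controlling for z
print(f"naive: {naive:.3f}, adjusted: {adjusted:.3f}")
```

The naive coefficient is clearly positive even though `x` does nothing to `y`; once `z` is included, the coefficient on `x` falls to roughly zero.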

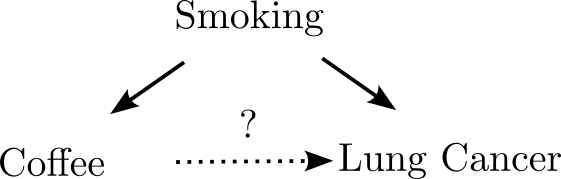

Collider Bias

Collider Bias describes a situation when the independent and dependent variable both influence a third variable which you have used in your analysis

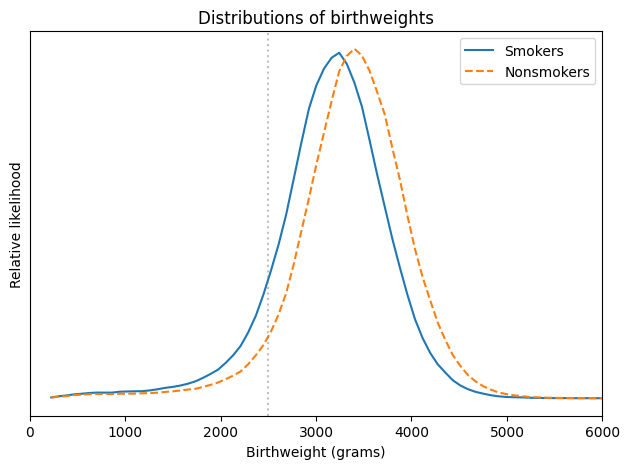

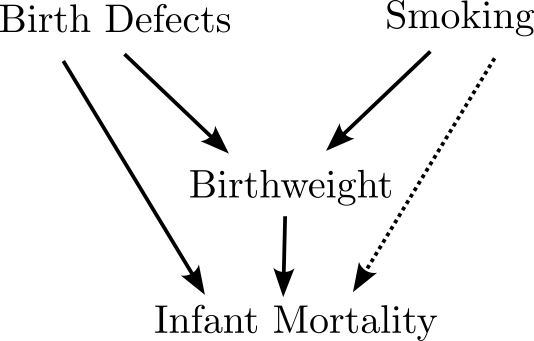

Low Birthweight Paradox

- Mortality rate for low birthweight babies is 25x that of normal weight babies

Low Birthweight Paradox

- The neonatal mortality rates for single live births of “low birth weight” were substantially and significantly lower for infants of smoking than of nonsmoking mothers.

- Paradoxical Finding:

- Mortality rate for low birthweight babies with smoking mothers was 50% lower!

- Makes it seem like smoking is protective against infant mortality

Should expectant mothers smoke?

Hint: Smokers were about twice as likely to have babies lighter than 2500 grams, which is considered “low birthweight”.

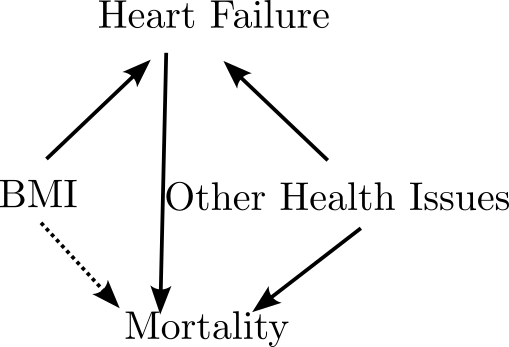

Birthweight is a Collider


- Both smoking and birth defects cause low birthweight

- Low birthweight infants of mothers who smoke have fewer birth defects

- Selecting on low birthweight induces a negative correlation between smoking and infant mortality

Collider Bias

- Unlike confounders, you must not include colliders in your regression if you care about inference!
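A sketch of collider bias on synthetic data (all variable names are illustrative assumptions). Here `x` and `y` are generated independently, and `c` is caused by both. Regressing `y` on `x` alone finds nothing, but adding the collider `c` to the regression manufactures a strong negative coefficient on `x`.

```python
import numpy as np

def ols(features, y):
    """Least-squares coefficients; an intercept column is appended last."""
    X = np.column_stack(features + [np.ones(len(y))])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

rng = np.random.default_rng(3)
n = 5000
x = rng.normal(size=n)                       # independent variable
y = rng.normal(size=n)                       # dependent variable, unrelated to x
c = x + y + rng.normal(scale=0.5, size=n)    # collider: caused by both x and y

without_c = ols([x], y)[0]      # coefficient on x with the collider left out
with_c = ols([x, c], y)[0]      # coefficient on x with the collider included
print(f"without collider: {without_c:.3f}, with collider: {with_c:.3f}")
```

This is the opposite of the fork case: there, adding the third variable removed a spurious association, while here adding it creates one.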

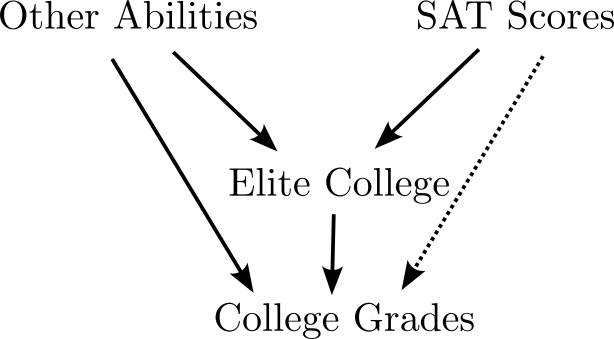

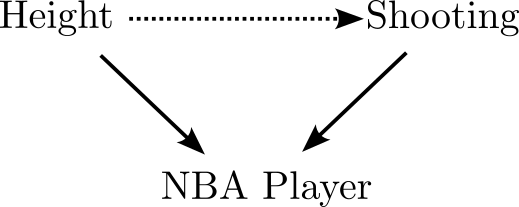

Simpson’s/Berkson’s Paradox

- This appears all over the place:

- For students already accepted to college, SAT scores don’t predict success

- Shorter NBA players are better at shooting than taller ones

- Higher BMI is associated with better survival for heart disease patients
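The college admissions example can be mimicked with a toy simulation. Everything here is a hypothetical setup, not real admissions data: `test_score` and `other_merit` are independent in the full population, both feed into `success`, and admission selects on their sum.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 20000
test_score = rng.normal(size=n)       # hypothetical standardized test score
other_merit = rng.normal(size=n)      # hypothetical other factors admissions reward
success = test_score + other_merit + rng.normal(size=n)   # outcome depends on both

admitted = (test_score + other_merit) > 1.5   # selection on the sum

corr_all = np.corrcoef(test_score, success)[0, 1]
corr_admitted = np.corrcoef(test_score[admitted], success[admitted])[0, 1]
corr_traits = np.corrcoef(test_score[admitted], other_merit[admitted])[0, 1]
print(f"all: {corr_all:.2f}, admitted only: {corr_admitted:.2f}")
```

Among the admitted, a low test score implies high `other_merit` (otherwise the applicant would not have been selected), so the two traits become negatively correlated and the test score looks like a much weaker predictor than it is in the full population.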

Collinearity for Inference

- Common advice is to exclude collinear predictors

- For inference, confounding is much more important and should be your primary concern

- Inference is only as good as your assumptions

Linear Models for Prediction

- Confounding concerns go out the window when your goal is accurate predictions

- Out of sample accuracy, rather than inference/interpretability

- How?

Comparing Models

- Hold Out/Cross Validation

- Save some testing data, use it to test the model
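A minimal k-fold cross-validation sketch in plain NumPy, assuming synthetic data. The function name `kfold_mse` and the choice of 80 pure-noise features are illustrative. Each fold is held out once, the model is fit on the rest, and the held-out squared errors are averaged.

```python
import numpy as np

def kfold_mse(X, y, k=5, seed=0):
    """Average held-out mean squared error of least squares over k folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    errors = []
    for i in range(k):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        w, *_ = np.linalg.lstsq(X[train], y[train], rcond=None)
        errors.append(np.mean((X[test] @ w - y[test]) ** 2))
    return float(np.mean(errors))

rng = np.random.default_rng(5)
n = 300
x = rng.normal(size=n)
y = 1.5 * x + rng.normal(size=n)               # one real predictor plus unit noise
ones = np.ones(n)
X_small = np.column_stack([x, ones])
X_big = np.column_stack([x, rng.normal(size=(n, 80)), ones])   # adds 80 noise features

mse_small = kfold_mse(X_small, y)
mse_big = kfold_mse(X_big, y)
print(f"small model: {mse_small:.3f}, big model: {mse_big:.3f}")
```

The larger model always fits the training folds at least as well, but its held-out error is worse because the extra coefficients are fit to noise.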

Comparing Models

- Information Criteria

- Train with all the data, use statistical theory and likelihood to estimate out of sample accuracy

Akaike Information Criterion

AIC for a statistical model is: \[ AIC = 2k - 2\log(\hat{L}) \]

\(\hat{L}\) is the maximized likelihood of the model

\(k\) is the number of parameters

This balances model complexity (\(k\)) with in-sample accuracy; lower AIC is better
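A sketch of computing AIC for a Gaussian linear model by hand, using the common convention \(AIC = 2k - 2\log(\hat{L})\) (rescaling by a constant factor does not change which model ranks lowest). The function name `gaussian_aic` and the synthetic data are assumptions. Here \(k\) counts the regression coefficients including the intercept; also counting the noise variance would shift every model by the same amount.

```python
import numpy as np

def gaussian_aic(X, y):
    """AIC = 2k - 2 log L-hat for least squares with Gaussian errors."""
    n, k = X.shape
    w, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = float(np.sum((y - X @ w) ** 2))
    sigma2 = rss / n                                     # maximum-likelihood noise variance
    log_lik = -0.5 * n * (np.log(2 * np.pi * sigma2) + 1)
    return 2 * k - 2 * log_lik

rng = np.random.default_rng(6)
n = 200
x1, x2 = rng.normal(size=n), rng.normal(size=n)
y = 2.0 * x1 - 1.0 * x2 + rng.normal(size=n)   # both features matter (synthetic)
ones = np.ones(n)

aic_basic = gaussian_aic(np.column_stack([x1, ones]), y)       # omits x2
aic_full = gaussian_aic(np.column_stack([x1, x2, ones]), y)    # includes x2
print(f"basic: {aic_basic:.1f}, full: {aic_full:.1f}")
```

Because `x2` carries real signal, the gain in likelihood far outweighs the penalty for one extra parameter, and the fuller model gets the lower AIC.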

Akaike Information Criterion

- There are a wide variety of other information criteria (BIC, WAIC, etc)

- They are used for comparing models

- For linear models, AIC approximates the loss of information caused by using the model out of sample \[ KL(p,q) = \int_{X} p(x)\left(\log(p(x))-\log(q(x))\right)dx \]

- Only used for model comparison

AIC Intuition

- Training sample has some noise

- Additional parameters help to reduce error, but partially fit noise

- For linear models, you can estimate the “cost” of this fit with respect to the KL divergence
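A sketch of this intuition on synthetic data (names and sizes are assumptions). Features that are pure noise are added a few at a time to a model with one real predictor. The training error can only go down as nested features are added, while AIC, computed here as \(2k - 2\log(\hat{L})\) for Gaussian errors, charges 2 for every extra parameter.

```python
import numpy as np

def fit_stats(X, y):
    """Return (training RSS, AIC) for a least-squares fit with Gaussian errors."""
    n, k = X.shape
    w, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = float(np.sum((y - X @ w) ** 2))
    aic = 2 * k + n * (np.log(2 * np.pi * rss / n) + 1)   # 2k - 2 log L-hat
    return rss, aic

rng = np.random.default_rng(7)
n = 100
x = rng.normal(size=n)
y = x + rng.normal(size=n)                    # one real predictor plus noise
noise_features = rng.normal(size=(n, 20))     # unrelated to y by construction

results = []
for extra in range(0, 21, 5):
    X = np.column_stack([x, noise_features[:, :extra], np.ones(n)])
    results.append((extra, *fit_stats(X, y)))

for extra, rss, aic in results:
    print(f"{extra:2d} noise features: RSS = {rss:7.2f}, AIC = {aic:7.2f}")
```

The drop in RSS from noise features is the part of the fit that will not carry over to new data, which is what the penalty term is meant to offset.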

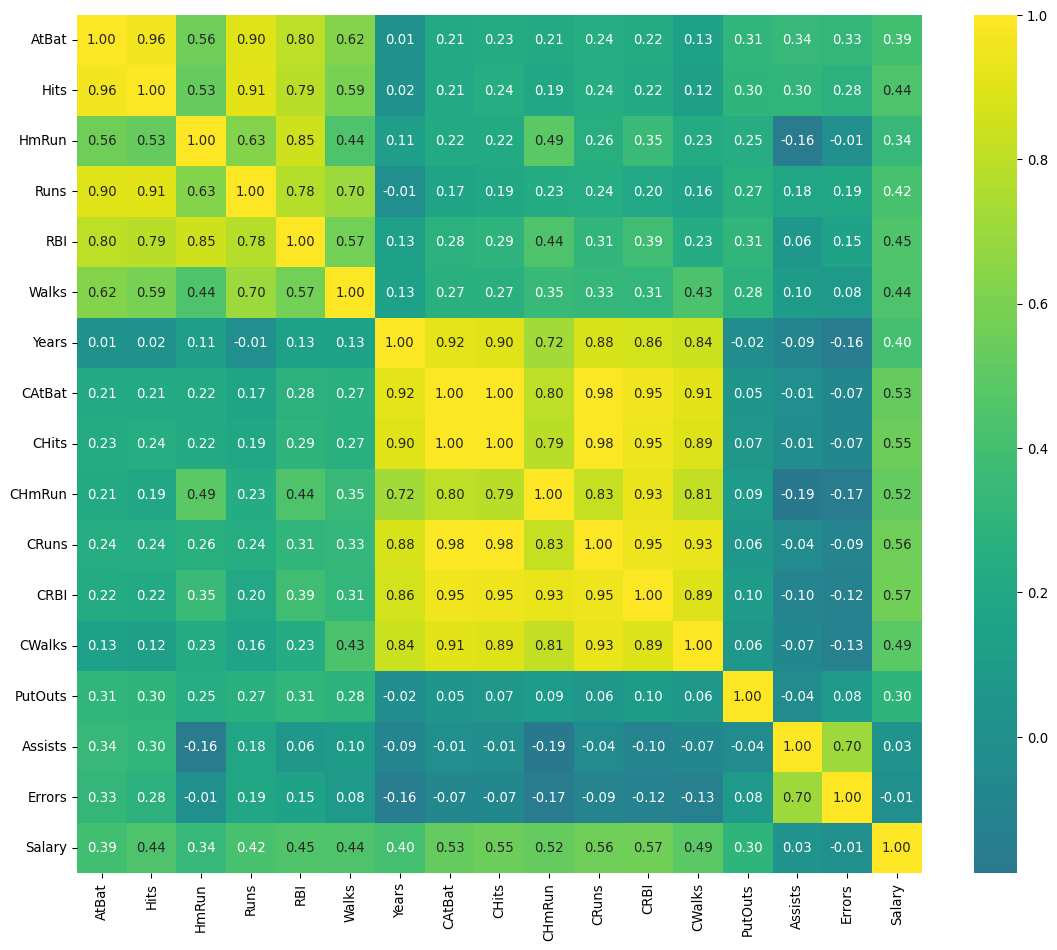

Example: Hitters Data

- Dataset that contains information on baseball players in 1987

`<class 'pandas.DataFrame'>`

Index: 263 entries, 1 to 321

Data columns (total 20 columns):

| # | Column | Non-Null Count | Dtype |
|---|---|---|---|
| 0 | AtBat | 263 non-null | int64 |
| 1 | Hits | 263 non-null | int64 |
| 2 | HmRun | 263 non-null | int64 |
| 3 | Runs | 263 non-null | int64 |
| 4 | RBI | 263 non-null | int64 |
| 5 | Walks | 263 non-null | int64 |
| 6 | Years | 263 non-null | int64 |
| 7 | CAtBat | 263 non-null | int64 |
| 8 | CHits | 263 non-null | int64 |
| 9 | CHmRun | 263 non-null | int64 |
| 10 | CRuns | 263 non-null | int64 |
| 11 | CRBI | 263 non-null | int64 |
| 12 | CWalks | 263 non-null | int64 |
| 13 | League | 263 non-null | category |
| 14 | Division | 263 non-null | category |
| 15 | PutOuts | 263 non-null | int64 |
| 16 | Assists | 263 non-null | int64 |
| 17 | Errors | 263 non-null | int64 |
| 18 | Salary | 263 non-null | float64 |
| 19 | NewLeague | 263 non-null | category |

dtypes: category(3), float64(1), int64(16)

memory usage: 37.9 KB

Predicting Salary

- We have a large number of variables to choose from

- What should we put in a predictive model to prevent overfitting?

| Column | Salary |
|---|---|
| CRBI | 0.566966 |
| CRuns | 0.562678 |
| CHits | 0.548910 |
| CAtBat | 0.526135 |
| CHmRun | 0.524931 |
| CWalks | 0.489822 |
| RBI | 0.449457 |
| Walks | 0.443867 |
| Hits | 0.438675 |
| Runs | 0.419859 |
| Years | 0.400657 |
| AtBat | 0.394771 |
| HmRun | 0.343028 |
| PutOuts | 0.300480 |
| Assists | 0.025436 |
| Errors | 0.005401 |

Name: Salary, dtype: float64

Collinear Predictors

Lowest AIC

- Model of intermediate complexity is best

| | models | aic |
|---|---|---|
| 1 | intelligent | 3780.465325 |
| 2 | everything | 3790.489087 |
| 0 | basic | 3859.826181 |

- `intelligent` dropped several features that were irrelevant or redundant

- Doesn’t have `HmRun`, `RBI`, `CHmRun`

Thanks!

DATA 622